VR Therapist [OpenAI]

Tags: Virtual Reality, Avatar, OpenAI, Mental health, Voice-to-text, Text-to-speech

Time: 2 days (2023)

Why

- ChatGPT is powerful but lacks presence; therapy benefits from embodiment and context.

- Goal: test whether a calm VR room and a humanlike avatar increase willingness to talk.

How

- Fake the hard parts to learn fast: amplitude-based mouth motion (not full lip-sync).

- Map chat to physical affordances (Record / Receive buttons) and an in-scene transcript.
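
The amplitude-based mouth motion mentioned above can be sketched roughly as follows. This is a minimal illustration in plain C#, not the prototype's actual script: the class name `MouthAmplitude`, the `gain` of 4 and the smoothing `factor` of 0.3 are all assumptions chosen for readability.

```csharp
using System;

// Hypothetical helper (not from the prototype): turns a block of audio
// samples into a 0..1 "mouth open" value instead of doing real lip-sync.
public static class MouthAmplitude
{
    // Root-mean-square loudness of samples expected in the range [-1, 1].
    public static float Rms(float[] samples)
    {
        if (samples == null || samples.Length == 0) return 0f;
        double sumSquares = 0.0;
        foreach (float s in samples) sumSquares += s * s;
        return (float)Math.Sqrt(sumSquares / samples.Length);
    }

    // Scales loudness by an assumed gain and clamps the result to [0, 1].
    public static float Openness(float[] samples, float gain = 4f)
    {
        return Math.Min(1f, Math.Max(0f, Rms(samples) * gain));
    }

    // Moves a fraction of the way toward the target each frame,
    // so the jaw does not jitter between consecutive audio blocks.
    public static float Smooth(float previous, float target, float factor = 0.3f)
    {
        return previous + (target - previous) * factor;
    }
}
```

In Unity, the sample block could plausibly come from `AudioSource.GetOutputData`, and the smoothed value could drive a jaw bone rotation or a blend shape weight each frame. The trade-off is that the mouth follows loudness only, so it opens on any sound and never forms distinct shapes for different phonemes.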

What

- Stack: Unity (Quest 2), XR Interaction Toolkit, Mixamo, OpenAI (Whisper + GPT).

- Loop: Record → Transcribe (Whisper) → Reflect (GPT) → Show/Play reply → Continue.

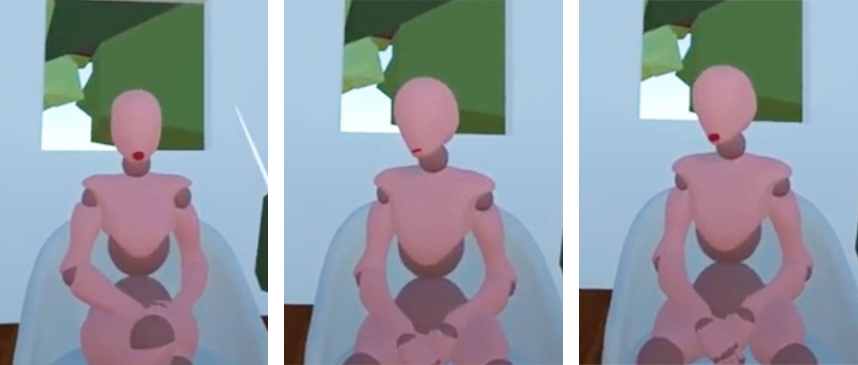

- Scene: Minimal, soft lighting; seated avatar with simple mouth motion.
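
One way to picture the Record → Transcribe → Reflect → Reply loop is as a small state machine. The sketch below is an assumption about how the turns could be modeled, not the prototype's code: the state and event names are hypothetical, and the actual Whisper and GPT calls are left out because they depend on the OpenAI Unity package.

```csharp
using System;

// Hypothetical states for one conversational turn.
public enum TurnState { Idle, Recording, Transcribing, Reflecting, Replying }

// Hypothetical events raised by buttons or by finished network calls.
public enum TurnEvent { RecordPressed, RecordingStopped, TranscriptReady, ReplyReady, ReplyFinished }

public static class TherapyLoop
{
    // Returns the next state; events that do not fit the current state are ignored.
    public static TurnState Next(TurnState state, TurnEvent evt)
    {
        switch (state)
        {
            case TurnState.Idle when evt == TurnEvent.RecordPressed:
                return TurnState.Recording;
            case TurnState.Recording when evt == TurnEvent.RecordingStopped:
                return TurnState.Transcribing;   // audio would go to Whisper here
            case TurnState.Transcribing when evt == TurnEvent.TranscriptReady:
                return TurnState.Reflecting;     // transcript would go to GPT here
            case TurnState.Reflecting when evt == TurnEvent.ReplyReady:
                return TurnState.Replying;       // reply is shown and/or spoken
            case TurnState.Replying when evt == TurnEvent.ReplyFinished:
                return TurnState.Idle;           // ready for the next turn
            default:
                return state;
        }
    }
}
```

Ignoring out-of-order events keeps the loop robust to double button presses, for example a second Record press while a transcription is still pending.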

Next

- Emotion-reactive room: simple rules first (color/light shift with sentiment).

- Frictionless voice loop: pre-selected mic, inline transcription, auto-advance turns.

- Embodied reply polish: basic viseme lip-sync + idle breathing/gaze; one calm TTS voice.

- Lightweight memory & safety: local session log + “not crisis care” link, optional save.
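
As an illustration of the "simple rules first" idea for the emotion-reactive room, the sketch below maps a sentiment score to a light color. Everything here is assumed for the example: the score range of -1 to 1, the `Rgb` struct, the `MoodLighting` class and the two endpoint colors. How the sentiment score would be obtained is left open.

```csharp
using System;

// Minimal color container so the sketch runs outside Unity.
public readonly struct Rgb
{
    public readonly float R, G, B;
    public Rgb(float r, float g, float b) { R = r; G = g; B = b; }
}

public static class MoodLighting
{
    // Assumed endpoint colors: cool blue for negative, warm amber for positive.
    static readonly Rgb Cool = new Rgb(0.55f, 0.70f, 0.95f);
    static readonly Rgb Warm = new Rgb(1.00f, 0.85f, 0.65f);

    // Maps a sentiment score in [-1, 1] to a color between Cool and Warm.
    // Scores outside that range are clamped.
    public static Rgb ForSentiment(float sentiment)
    {
        float clamped = Math.Min(1f, Math.Max(-1f, sentiment));
        float t = (clamped + 1f) / 2f;   // -1 -> 0, 0 -> 0.5, 1 -> 1
        return new Rgb(
            Cool.R + (Warm.R - Cool.R) * t,
            Cool.G + (Warm.G - Cool.G) * t,
            Cool.B + (Warm.B - Cool.B) * t);
    }
}
```

In Unity, the result could be copied into a `Color` and assigned to a `Light.color`, ideally blended over a second or two so the room shifts gradually rather than flickering with each sentence.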

References:

- Text to Speech with AWS Polly in Unity

- ChatGPT in Unity

- Use Your Voice as Input in Unity

- Background music: Renda, David. “Tranquility.” Ambient Chill

Software: Unity, JetBrains Rider (C#), Revit

Tech Specification:

- Unity Engine [2021.3.23f1]

- XR Interaction Toolkit [2.3.1]

- Oculus XR Plugin [3.3.0]

- Universal Render Pipeline [12.1.11]

- XR Plugin Management [4.3.3]

- OpenAI Unity [0.1.12] (including Whisper)

- AWS SDK for .NET [Standard 2.0 and 2.1]

Prototype Demo